"Do I need a degree to do ML?" Umm...

Plus, ML's technical debt, normalizing flows, and productized services

Ear to the Ground

Excellent advice for junior data scientists: “So many people disappointed with their jobs. You need to manage your expectations, especially if you're very junior.”

Amazon puts a one-year moratorium on police use of facial-recognition technology Rekognition

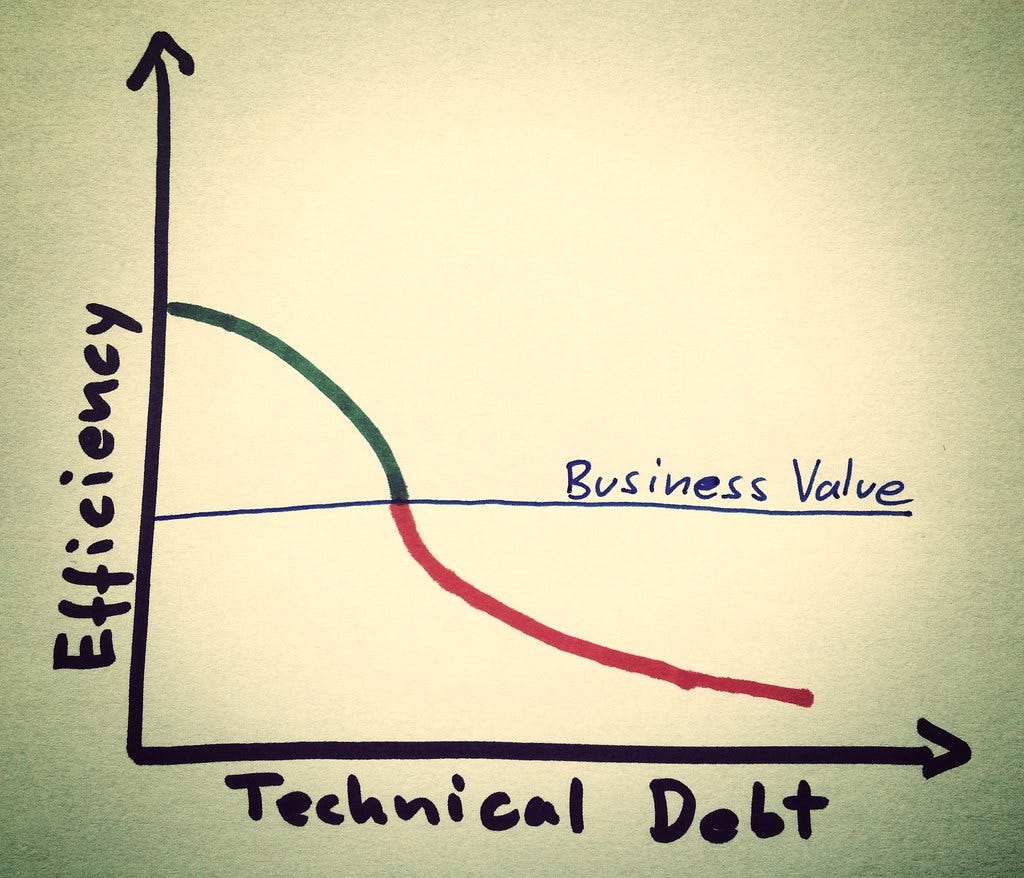

ML’s darkest secret: technical debt

The gist is that the way machine learning libraries, models, and projects tend to work conflicts with decades of software engineering best practices designed to control technical debt.

There are a few technical papers on the topic. But this blog post addresses it succinctly, at least from the standpoint of reproducibility in notebook-based modeling. I think technical debt in deployed apps is much worse.

Avoiding technical debt with ML pipelines — maiot blog

Do I need a degree?

When I’m asked if it’s necessary to get a degree to learn machine learning or data science, I don’t know how to answer. Here are a few reasons why.

The premise of the question is that grad school is a means to an end. However, I went to learn theoretical things in an academic setting because I wanted to. Career goals never really factored in. Even before starting on stats and ML, I spent time at Hopkins SAIS learning Chinese and China Studies. That language ability and China studies education have had no direct application in my machine learning career, yet they are valuable to me.

Tech has many examples of successful people who accomplished much without advanced degrees. A friend of mine named Eli is a shockingly talented machine learning researcher who only has a B.S. But I suspect Eli is smarter than me. Here are some questions my fellow millennials might find uncomfortable:

What if you need to be exceedingly sharp to succeed in this domain without credentials?

If so, what if you are not exceedingly sharp, just regular-smart? (Statistically speaking, you probably aren’t exceedingly sharp.)

What if grit isn’t enough to make up the difference?

On the other hand, the tech industry’s supposed meritocracy is at least partly bullshit. Intelligence is definitely useful, but privilege can open doors for some that would remain closed to others. Bill Gates is exceedingly sharp, but he also had rare access to computing tech when he was in high school. Moreover, if you are from an underrepresented group, lack of credentials could be used by sexists/bigots as a legitimate reason to exclude you.

Anyway, this post from OpenAI’s Christopher Olah does a great job addressing all these issues.

Members-only: Productized services as a model for machine learning startups

I recently interviewed an investor in bootstrapped (read: revenue-focused, non-VC-funded) startups. He said this:

In the traditional VC world, "services" is a dirty word. In reality, it's a great way to bring cash into a business and fund development with customer funding rather than venture funding. We've seen a lot of interesting businesses where you start with a customer, and maybe it's not a 90% margin business, but it's 50, 60%. But you can layer a couple of those. And as you work with those customers, you're building your technology. Obviously, just make sure you have the right IP language in your contract. And with that, you're able to effectively have customers pay for development, you can use that money to hire a couple of people in the tech team to be able to scale that up, and then ultimately, flip those customer relationships over time into SaaS.

People in the AI space try to build consulting service businesses with the goal of transitioning to SaaS. Here is why this won’t work, and why productized services are a better approach.

Summer of Generative Models

This summer, we are reading up on deep generative modeling research, with the goal of understanding the practical uses of these models. If this is new to you, this book is a great start.

This week saw the publication of DeepFaceDrawing, a generative model that produces photo-realistic face images from sketches.

Perhaps we just need to move away from simulating images of pretty white women.

This week’s reading: Flows for simultaneous manifold learning and density estimation by Johann Brehmer and Kyle Cranmer.

We introduce manifold-learning flows (M-flows), a new class of generative models that simultaneously learn the data manifold as well as a tractable probability density on that manifold. Combining aspects of normalizing flows, GANs, autoencoders, and energy-based models, they have the potential to…

If interested, I recommend starting here with Ari Seff’s excellent introduction to normalizing flows. For energy-based models, check out this video by Yann LeCun and this thread.