Tech companies are gaming an AI sharecropper system

plus new reports on facial recognition by police

AltDeep is a newsletter focused on microtrend-spotting in data and decision science, machine learning, and AI. It is authored by Robert Osazuwa Ness, a Ph.D. machine learning engineer at an AI startup and adjunct professor at Northeastern University.

Overview:

Announcements

Ear to the Ground: AI sharecroppers, reports on police use of facial recognition tech

AI Long Tail: Content generation with Frase.io

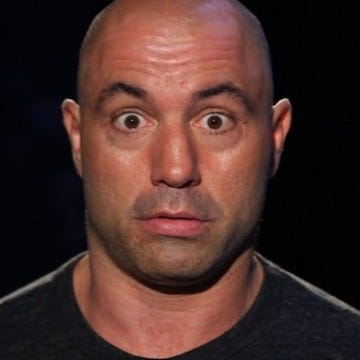

Data Sciencing for Fun and Profit: Make your own Joe Rogan podcast episode

The Tao of Data Science: Laplace’s demon and its implications for generative machine learning

Announcements

Doing a bit of a refactor this week.

I’m doubling down on using a curated newsletter as a platform for spotting microtrends in data science and machine learning. To that end, I am removing the Essential Read section and focusing on Ear to the Ground.

Renaming the Philosophy of Data Science section to The Tao of Data Science. It will track a column on this topic that I am publishing on Medium.

I am removing the Negative Examples section. The goal of this section was to report on the phenomenon of AI hype itself, a kind of meta-AI journalism. I need to think of a better way to do this.

Renaming the AI for the Rest of Us section to AI Long Tail. I think Long Tail captures the idea that there is some kind of 80-20 rule in play, where big tech companies and VC investment targeting big exits account for around 80% (probably much more) of activity in the AI/ML/DS domain, and that things happening in the other 20% are under-reported.

Changing the send day to Thursday, because a marketer told me so.

Ear to the Ground

Curated posts aimed at spotting microtrends in data science, machine learning, and AI.

The impact of the Lottery Ticket Hypothesis

The methods from the paper that introduced the Lottery Ticket Hypothesis, published last year and revised as recently as last month, are reportedly being adopted at large tech companies with cutting-edge in-product deep learning setups. The paper shows that deep neural nets can be reduced to a sparse set of subnetworks that do most of the heavy lifting, and provides an algorithm for pruning a deep net down to such subnetworks while preserving predictive performance.

Many have articulated versions of this hypothesis for quite some time, since actual biological neural networks are much sparser and much more energy efficient than a typical deep net.
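
To make the pruning idea concrete, here is a minimal sketch of a single magnitude-pruning round followed by a reset to the initial weights, which is the core move in the paper's algorithm as I understand it. The weight values and the function names `magnitude_mask` and `reset_to_init` are made up for illustration, and the training step is skipped entirely; this is not the paper's code.

```python
def magnitude_mask(weights, prune_fraction):
    """Return a 0/1 mask that drops the smallest-magnitude weights."""
    n_prune = int(len(weights) * prune_fraction)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(order[:n_prune])
    return [0 if i in pruned else 1 for i in range(len(weights))]


def reset_to_init(initial_weights, mask):
    """Rewind surviving weights to their initial values; pruned weights become zero."""
    return [w0 * m for w0, m in zip(initial_weights, mask)]


# Hypothetical numbers: one layer's weights at initialization and after training.
initial = [0.5, -0.1, 0.3, 0.05, -0.8, 0.2]
trained = [0.9, -0.02, 0.4, 0.01, -1.1, 0.05]

mask = magnitude_mask(trained, prune_fraction=0.5)
ticket = reset_to_init(initial, mask)
print(mask)
print(ticket)
```

In the iterative version, the masked network would be retrained and pruned again over several rounds, with the mask deciding which connections survive each round.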

How the Lottery Ticket Hypothesis is challenging everything we knew about training neural networks — Towards Data Science

The AI sharecroppers

There is a growing invisible underclass of labor in the U.S. and across the globe who painstakingly inventory and label millions of pieces of data and images. These people get bottom-of-the-barrel compensation, with one source reporting a range from $7 to $15 an hour, but that seems to be the top; in Malaysia, for example, the pay can be around $2.50 an hour.

AI companies are arbitraging the difference between these low wages and the immense, long-term value added by the algorithmic predictive power this labor enables.

The system looks like sharecropping — labelers get subsistence wages, AI companies are like land owners who capture all the wealth.

Police use of facial recognition is growing

Police departments throughout the U.S. are quietly rolling out facial recognition systems, despite growing attention to inaccuracy and algorithmic bias. Two reports from the Georgetown Law Center on Privacy and Technology outline both a proliferation of facial recognition systems and the haphazard way they are often used. As an example of haphazard use, one report points out a case in New York where police arrested suspects after running searches on photos of celebrities who look like the suspect, as well as on police sketches.

America under watch. Face surveillance in the United States. Georgetown Law Center on Privacy and Technology

Garbage In, Garbage Out. Face Recognition on Flawed Data. Georgetown Law Center on Privacy and Technology

AI Long Tail

In my professional life, I’ve seen lots of signal indicating that digital marketing is the domain where AI-as-a-Service hits the pavement. That said, I also hear sustained skepticism about AI from software engineers who are used to building and selling SaaS products to digital marketers. Perhaps it is because, if done well, these products don’t feel like some mystical “AI”, they feel like a solution to a specific real world problem.

This week I discovered and played around with Frase.io, a platform that provides an “AI layer for your content”. Despite this line, it doesn’t feel like AI. It feels like a tool designed to automate a digital marketer’s content creation process, namely by helping them optimize SEO as the marketer is writing content. The “AI” seems to be natural language processing that matches the content being typed to search results, and provides some automatic summarization of competing content already out there on the Web.

All in all, I am impressed with the product. I especially like that at $25 a month for the basic subscription, they are not depending exclusively on enterprise sales.

Data Sciencing for Fun and Profit

Make your own Joe Rogan podcast episode

Joe Rogan’s large archive of daily two-hour podcasts has provided enough training data to train a truly impressive deep fake generator of his voice.

A low tech Nancy Pelosi deep fake made the rounds this week as well.

Tao of Data Science

Demonic determinism in generative machine learning

Pierre-Simon Laplace supposed that everything is composed of atoms and that the motions of atoms are governed by Newtonian physics.

As a thought experiment, Laplace imagined a kind of hyper-clairvoyant demon:

The demon knows the initial conditions of all the positions and velocities of all the particles in the universe;

The demon knows all the (Newtonian) laws of physics.

Laplace argues that given this knowledge, the demon could perfectly predict the future position and dynamics of all the physical bodies within the universe, including you. It does this by starting with the initial conditions and projecting forward using the deterministic laws of physics.
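
A toy version of the demon's procedure can be sketched in a few lines. The example below assumes a single apple released from rest at a height of 10 meters under constant gravity, and approximates Newton's laws with small explicit Euler steps; the function names `step` and `project`, the step size, and the numbers are all illustrative assumptions. The point is only that the same initial conditions always project to the same future.

```python
def step(position, velocity, dt, gravity=-9.8):
    """Advance one body by one explicit Euler step under constant gravity."""
    new_position = position + velocity * dt
    new_velocity = velocity + gravity * dt
    return new_position, new_velocity


def project(position, velocity, dt, n_steps):
    """Project the state forward from its initial conditions."""
    for _ in range(n_steps):
        position, velocity = step(position, velocity, dt)
    return position, velocity


# Same initial conditions, same laws: the projected future is identical.
first_run = project(10.0, 0.0, dt=0.01, n_steps=100)
second_run = project(10.0, 0.0, dt=0.01, n_steps=100)
print(first_run == second_run)
```

The demon, of course, would do this for every particle in the universe at once and with exact laws rather than a numerical approximation.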

Building determinism into probabilistic generative models

Probabilistic generative models model uncertainty with probability. Recall the apple that fell upon Newton’s head and inspired his conception of physics. The following is a probabilistic model of the process of weighing that apple.
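
As one hedged illustration of what a model of this kind might look like, the sketch below draws the apple's true weight from a prior and then draws the scale reading as a noisy measurement of that weight. The Gaussian distributions, the parameter values (in grams), and the names `weigh_apple` and `simulate` are assumptions chosen for illustration and use only Python's standard library.

```python
import random


def weigh_apple(rng, prior_mean=150.0, prior_sd=20.0, scale_sd=5.0):
    """Sample one (true weight, scale reading) pair, in grams."""
    # Uncertainty about the apple itself (assumed Gaussian prior).
    true_weight = rng.gauss(prior_mean, prior_sd)
    # Uncertainty added by an imperfect scale (assumed Gaussian noise).
    reading = rng.gauss(true_weight, scale_sd)
    return true_weight, reading


def simulate(seed, n):
    """Run the generative process n times from a seeded random generator."""
    rng = random.Random(seed)
    return [weigh_apple(rng) for _ in range(n)]


samples = simulate(seed=0, n=10_000)
mean_reading = sum(reading for _, reading in samples) / len(samples)
print(round(mean_reading, 1))
```

Note that once the seed is fixed, every draw is reproducible: all the randomness enters through the generator, and everything downstream of it is deterministic.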